Python Multiprocessing (the multiprocessing module)

Notes on multiprocessing

Process

Import packages and define the process function

`import time`

Run multiple processes

`# Create the Process`

Printed output

`Start Process`
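The steps above (define a function, create a `Process`, start it, wait for it) can be sketched roughly as follows. This is a minimal illustration, not the original code from this post: the names `task` and `run_processes`, the arguments, and the sleep duration are all assumptions made for the example.

```python
import multiprocessing as mp
import time


def task(name, seconds):
    """Hypothetical worker: announce itself, pretend to work, then finish."""
    print(f"Start Process {name}")
    time.sleep(seconds)
    print(f"End Process {name}")


def run_processes(count=3, seconds=0.1):
    """Start `count` processes, wait for all of them, return their exit codes."""
    procs = [mp.Process(target=task, args=(i, seconds)) for i in range(count)]
    for p in procs:
        p.start()  # each process begins running task() independently
    for p in procs:
        p.join()   # block until that process has exited
    return [p.exitcode for p in procs]


if __name__ == "__main__":
    # The guard keeps child processes from re-running this block
    # on platforms that start workers by importing the main module.
    print(run_processes())
```

Because the processes run concurrently, the order of the printed "Start" and "End" lines can differ from run to run.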

Pool (process pool)

Import packages and define the process function

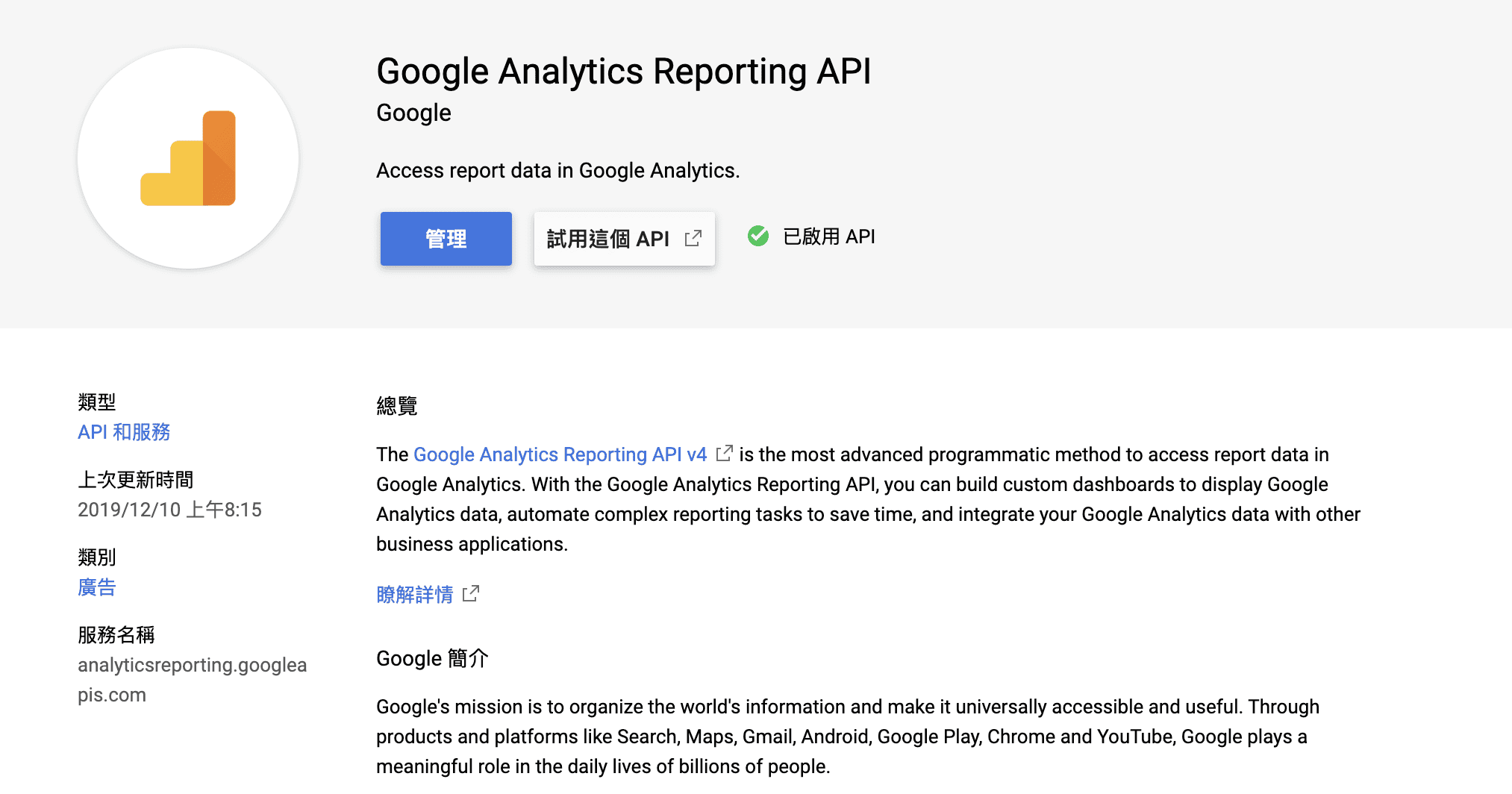

Scrape the top five Google News stories using multiple processes

`import requests`

News topics

`google_news_topic = [`

Run multiple processes

pool.map

`# processes defaults to the number of CPU cores`
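As a rough sketch of how `pool.map` is typically used: it applies one function to every item of an iterable across the worker processes and returns the results as a list in input order. The worker `label`, the helper `run_map`, and the topic strings below are hypothetical stand-ins for the post's scraping function and topic list, chosen so the example runs without network access.

```python
import multiprocessing as mp


def label(topic):
    """Hypothetical stand-in for the per-topic work (e.g. fetching headlines)."""
    return f"topic={topic}"


def run_map(topics, processes=None):
    """Apply label() to every topic in a pool and return the ordered results."""
    # processes=None lets Pool choose its default number of workers.
    with mp.Pool(processes=processes) as pool:
        # map() blocks until every item has been processed.
        return pool.map(label, topics)


if __name__ == "__main__":
    print(run_map(["world", "business", "technology"]))
```

`map` blocks until all items are done, so it is the simpler choice when every task takes the same single argument.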

pool.apply_async

`pool = mp.Pool(processes=4)`
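A minimal sketch of the `apply_async` pattern, assuming a hypothetical worker `label` in place of the post's scraping function: each call submits one task and immediately returns an `AsyncResult`, and calling `.get()` on it waits for that task's return value. The helper name `run_apply_async` and the 30-second timeout are illustrative choices, not taken from the original code.

```python
import multiprocessing as mp


def label(topic):
    """Hypothetical stand-in for the per-topic work (e.g. fetching headlines)."""
    return f"topic={topic}"


def run_apply_async(topics, processes=4):
    """Submit one task per topic, then collect the results in submission order."""
    with mp.Pool(processes=processes) as pool:
        # apply_async() returns at once; the work happens in the background.
        pending = [pool.apply_async(label, (topic,)) for topic in topics]
        # get() blocks until the corresponding task has finished.
        return [result.get(timeout=30) for result in pending]


if __name__ == "__main__":
    print(run_apply_async(["world", "business", "technology"]))
```

Collecting the `AsyncResult` objects in a list and calling `.get()` in the same order yields the same ordered list that `pool.map` would return for the same function and inputs.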

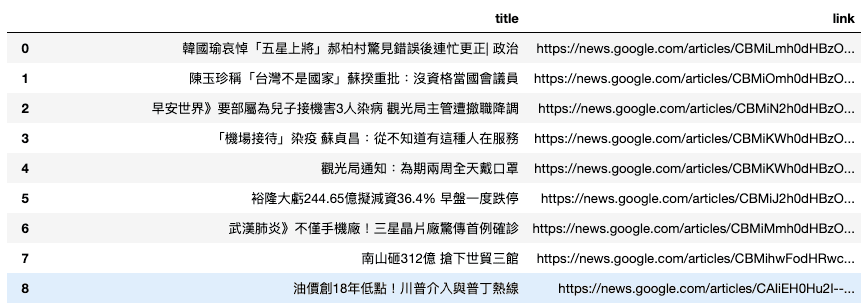

Printed output

In practice, `pool.map` and `pool.apply_async` produce the same results here.

Unless otherwise stated, all articles on this blog are licensed under CC BY-NC-SA 4.0. Please credit the source, 隨勛所欲, when reposting.
